Robust Decision Making: better decisions under uncertainty

Colombofabio (Talk | contribs) |


Revision as of 10:06, 18 February 2023


Abstract

Robust Decision Making (RDM) is a set of ideas, methods, and tools that employ computation to support better decision making under significant uncertainty. It integrates Decision Analysis, Assumption-Based Planning, Scenario Analysis, and Exploratory Modeling to simulate multiple possible futures, with the aim of identifying policy-relevant scenarios and robust adaptive strategies. These RDM analytic tools are frequently embedded in a decision support process referred to as "deliberation with analysis," which fosters learning and agreement among stakeholders [1]. This article reviews the current state of the art of RDM in project management, including its key principles and practices, such as the importance of data gathering and analysis, considering different options, and involving stakeholders. It also examines the benefits, challenges, and limitations of RDM in project management and outlines future directions for research in this area. The aim is to give project managers a deeper understanding of the principles and practices of RDM, along with guidance on, and an example of, how to implement it correctly. Ultimately, the article seeks to contribute to more effective and efficient approaches to project management and decision making by promoting the use of RDM.

Big Idea: Robust Decision Making under uncertainty

Brief history

Robust Decision Making (RDM) emerged in the 1950s and 1960s, when the RAND Corporation developed it to evaluate the effectiveness of nuclear weapon systems [2] [3]. The approach was designed to address the uncertainty and ambiguity inherent in strategic planning, and it evolved to include simulation techniques, sensitivity analysis, and real options analysis. In the 1990s and 2000s, as the complexity and uncertainty of projects increased, RDM gained wider acceptance in project management and was applied to fields such as infrastructure, software development, and environmental management. Today, RDM is an established approach in project management, recognized for its ability to help project managers make well-informed and confident decisions, anticipate and manage uncertainty, and continuously monitor and adapt. RDM has also been applied in contexts beyond project management, such as climate change policy and disaster risk reduction [4] [5] [6] [7].

Literature review

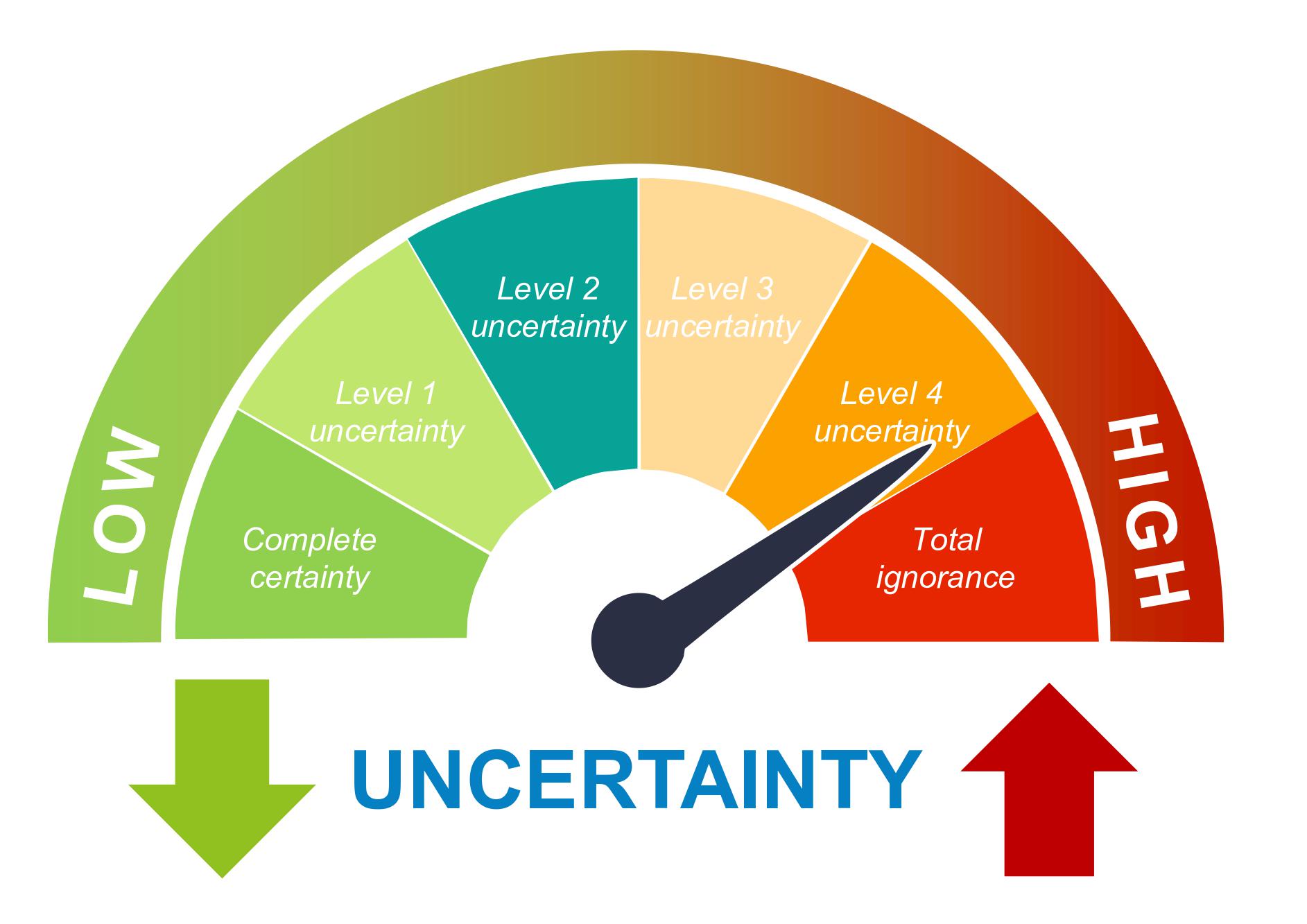

According to Donald Rumsfeld, there are three types of knowledge: known knowns, known unknowns, and unknown unknowns. Known knowns are things that we know for sure. Known unknowns are things that we know we do not know. The most challenging category is the unknown unknowns: things that we do not know we do not know [8] [9]. Knight had earlier proposed a related distinction between risk and uncertainty. Risk denotes situations in which the unknown can be measured (through probabilities) and, therefore, controlled. Uncertainty denotes situations in which the unknown cannot be quantified and, therefore, cannot be measured [10]. Building on Knight's distinction, academics have differentiated several levels of uncertainty in decision making, ranging from complete certainty to total ignorance [11] [12] [3]. These levels are categorized according to the knowledge assumed about various aspects of a problem: the future state of the world, the model of the relevant system, the outcomes from the system, and the weights that the various stakeholders will put on the outcomes. The four intermediate levels of uncertainty are defined as Level 1, where historical data can be used as predictors of the future [13]; Level 2, where probability and statistics can be used to solve problems; Level 3, where plausible future worlds are specified through scenario analysis; and Level 4, where the decision maker only knows that nothing can be known, owing to unpredictable events or a lack of knowledge or data [14] [15]. For problems at the highest level of uncertainty (Level 4), more sophisticated and in-depth data gathering is believed not to help. Decision making in such situations is termed decision making under deep uncertainty (DMDU) [3].

Instead of a "predict and act" paradigm, which attempts to anticipate the future and act on that prediction, DMDU approaches are based on a "monitor and adapt" paradigm, which aims to prepare for unknown occurrences and adjust accordingly [12]. To support decisions about unpredictable occurrences and long-term changes, this "monitor and adapt" paradigm "explicitly identifies the deep uncertainty surrounding decision making and underlines the necessity to take this deep uncertainty into consideration" ([1], p. 11). This article explores RDM, an approach that belongs to the family of DMDU methodologies.

Following the "monitor and adapt" paradigm, RDM refers to a collection of ideas, procedures, and supporting technologies intended to rethink the role of quantitative models and data in guiding choices under uncertainty. In the traditional view, models are tools for prediction and for the subsequent prescriptive ranking of decision options. In RDM, models and data instead become tools for systematically exploring the consequences of assumptions, expanding the range of futures considered, creating innovative responses to threats and opportunities, and sorting through a variety of scenarios, options, objectives, and problem framings to identify the most crucial trade-offs confronting decision makers. In other words, models and data are used not to improve forecasts but to help decision makers reach robust decisions [16]. As argued by Marchau et al., robustness is therefore pursued by iterating on the solution to a problem several times while stress-testing the proposed decisions against a wide variety of potential scenarios. In doing so, RDM supports the decision-making process under deep uncertainty [3].
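To make the idea of stress-testing decisions against many scenarios more concrete, the following is a minimal sketch, not a method taken from the cited sources. The cost model, the two strategies, the parameter ranges, and the budget threshold are all hypothetical values chosen for illustration. Robustness is measured here as the share of sampled scenarios in which a strategy stays within budget, which is only one of several possible robustness criteria.

```python
import random


def project_cost(strategy, scenario):
    """Toy cost model (hypothetical, for illustration only)."""
    # The contingency buffer absorbs part of the price shock.
    unabsorbed_shock = max(0.0, scenario["price_shock"] - strategy["buffer"])
    material_cost = strategy["base_cost"] * (1.0 + unabsorbed_shock)
    delay_cost = scenario["delay_months"] * strategy["monthly_burn"]
    return material_cost + delay_cost


def sample_scenarios(n, seed=0):
    """Draw n futures from assumed ranges.

    Sampling is used here to cover the space of futures,
    not to claim that each future is equally likely.
    """
    rng = random.Random(seed)
    return [
        {
            "price_shock": rng.uniform(0.0, 0.6),
            "delay_months": rng.uniform(0.0, 12.0),
        }
        for _ in range(n)
    ]


def robustness(strategy, scenarios, budget):
    """Fraction of scenarios in which the strategy stays within budget."""
    within = sum(1 for s in scenarios if project_cost(strategy, s) <= budget)
    return within / len(scenarios)


# Hypothetical candidate strategies: "hedged" pays more up front for a buffer.
strategies = {
    "lean": {"base_cost": 100.0, "buffer": 0.0, "monthly_burn": 2.0},
    "hedged": {"base_cost": 110.0, "buffer": 0.3, "monthly_burn": 2.0},
}

scenarios = sample_scenarios(1000)
scores = {
    name: robustness(s, scenarios, budget=140.0)
    for name, s in strategies.items()
}

nominal = {"price_shock": 0.0, "delay_months": 0.0}
for name, s in strategies.items():
    print(
        f"{name}: nominal cost {project_cost(s, nominal):.0f}, "
        f"within budget in {scores[name]:.0%} of scenarios"
    )
```

In this toy setup the "lean" strategy is cheaper under the nominal forecast, while the "hedged" strategy stays within budget across a larger share of the sampled futures, which is the kind of trade-off the paragraph above describes.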

Although several practical applications of RDM in project management can be found in the literature, theoretical support for applying the framework to project management practice remains thin. The remainder of the article therefore concentrates on the fundamental principles of RDM, guides the reader through the methodology, illustrates how RDM has been successfully used in a large-scale project, and discusses the benefits and limitations of the approach.

Foundations of Robust Decision Making

RDM rests on four key concepts, drawing on the legacy of each while offering a fresh expression of it. These are Decision Analysis, Assumption-Based Planning, Scenario Analysis, and Exploratory Modeling.

Decision Analysis (DA)

The discipline of DA provides a framework for creating and using well-structured decision aids. RDM exploits this framework but focuses specifically on finding trade-offs and describing vulnerabilities, so as to create robust decisions by stress-testing them against plausible future pathways. Both DA and RDM seek to improve the decision-making process by being clear about goals, using the best information available, carefully weighing trade-offs, and adhering to established standards and conventions to ensure legitimacy for all parties involved. However, while DA seeks optimality through utility frameworks and assumptions [17], RDM seeks robustness, treating uncertainty as deep and probabilities as imprecise [18], and highlighting trade-offs between plausible options.
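The contrast between optimality and robustness can be illustrated with a small worked example. This is a sketch under stated assumptions: the payoff table and the probabilities are invented, and minimax regret is used here as one common way to express robustness, not as the only criterion RDM admits.

```python
# payoffs[strategy][future]: higher is better. All numbers are hypothetical.
payoffs = {
    "A": {"f1": 100, "f2": 20, "f3": 10},
    "B": {"f1": 70, "f2": 60, "f3": 50},
    "C": {"f1": 40, "f2": 50, "f3": 55},
}


def expected_value_choice(payoffs, probs):
    """DA-style choice: maximize expected payoff under assumed probabilities."""
    def expected(strategy):
        return sum(probs[f] * payoffs[strategy][f] for f in probs)
    return max(payoffs, key=expected)


def regret_table(payoffs):
    """Regret = shortfall from the best achievable payoff in each future."""
    futures = list(next(iter(payoffs.values())))
    best = {f: max(payoffs[s][f] for s in payoffs) for f in futures}
    return {s: {f: best[f] - payoffs[s][f] for f in futures} for s in payoffs}


def minimax_regret_choice(payoffs):
    """Robustness-style choice: minimize the worst-case regret, no probabilities."""
    regrets = regret_table(payoffs)
    return min(regrets, key=lambda s: max(regrets[s].values()))


# The expected-value choice depends on which probabilities are assumed.
confident = {"f1": 0.8, "f2": 0.1, "f3": 0.1}
sceptical = {"f1": 0.2, "f2": 0.4, "f3": 0.4}
print(expected_value_choice(payoffs, confident))  # → A
print(expected_value_choice(payoffs, sceptical))  # → B
print(minimax_regret_choice(payoffs))             # → B
```

The expected-value choice flips when the assumed probabilities change, whereas the regret-based choice does not use probabilities at all. This mirrors the point that RDM treats probabilities as imprecise rather than as fixed inputs.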

Assumption-Based Planning (ABP)

By expanding awareness of how and why things could fail, RDM uses the ideas of stress testing and red teaming to lessen the harmful effects of overconfidence in current plans and processes [19]. This approach was first implemented in the Assumption-Based Planning (ABP) framework. Starting from a written version of an organization's plan, ABP identifies the explicit and implicit assumptions made during its formulation that, if untrue, would cause the plan to fail. Once planners have identified these sensitive assumptions, they can create backup plans and "hedging" strategies in case the assumptions begin to fail. ABP also relies on "signposts": patterns and events that are monitored to detect faltering assumptions [1].
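The link between assumptions, signposts, and hedging actions can be sketched as a small data structure. The example below is illustrative only: the `Assumption` class, the `check_signposts` function, the indicators, and the thresholds are hypothetical names and values, not part of the ABP literature.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Assumption:
    name: str                       # the load-bearing assumption in the plan
    indicator: str                  # signpost: the observation to monitor
    holds: Callable[[float], bool]  # True while the assumption still looks valid
    hedging_action: str             # prepared response if the assumption fails


def check_signposts(
    assumptions: List[Assumption], observations: Dict[str, float]
) -> List[Tuple[str, str]]:
    """Return (assumption, hedging action) pairs whose signpost has been tripped."""
    triggered = []
    for a in assumptions:
        value = observations.get(a.indicator)
        if value is None:
            continue  # no data for this signpost yet
        if not a.holds(value):
            triggered.append((a.name, a.hedging_action))
    return triggered


# Hypothetical plan assumptions for a construction project.
plan_assumptions = [
    Assumption(
        name="Steel price stays below 900 per tonne",
        indicator="steel_price",
        holds=lambda v: v < 900,
        hedging_action="Activate fixed-price supply contract",
    ),
    Assumption(
        name="Permit is granted within 6 months",
        indicator="permit_wait_months",
        holds=lambda v: v <= 6,
        hedging_action="Re-sequence work packages",
    ),
]

latest = {"steel_price": 950.0, "permit_wait_months": 4}
for name, action in check_signposts(plan_assumptions, latest):
    print(f"Assumption failing: {name} -> {action}")
```

Keeping the hedging action next to the assumption it protects makes the plan's vulnerabilities explicit, which is the purpose the paragraph above attributes to ABP.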

Scenario Analysis (SA)

To deal with deep uncertainty, RDM builds on the idea of SA [20]. Scenarios are defined as collections of potential future occurrences that illustrate various worldviews without explicitly assigning a relative likelihood to each [21]. They are frequently employed, without probabilities of occurrence, in deliberative processes involving stakeholders. The objective is to broaden the range of futures taken into consideration and to communicate a wide variety of futures to audiences. As a legacy of SA, RDM divides knowledge about the future into a limited number of distinct scenarios to aid the exploration and communication of deep uncertainty.

Exploratory Modeling (EM)

According to Bankes, Exploratory Modeling (EM) is the tool that allows DA, ABP, and SA to be integrated in RDM [22]. EM maps a wide range of assumptions onto their consequences without prioritizing one set of assumptions over another. EM is therefore especially useful when a single model cannot be validated because of a lack of evidence, insufficient or conflicting theories, or unknown futures. By lowering the demands for analytic tractability on the models employed, EM offers a quantitative framework for stress testing and scenario analysis and allows futures and strategies to be explored. Because EM favors no base case or single future as an anchor point, it allows for genuinely global sensitivity studies.
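As a rough illustration of exploring a model across many assumptions without a base case, the sketch below samples an assumed parameter space, runs a toy model on every sampled future, and then compares failure rates across halves of each parameter range. The model, the parameter names, and their ranges are hypothetical, and the half-range comparison is a deliberately crude stand-in for the sensitivity methods used in practice.

```python
import random

# Assumed plausible ranges for the uncertain factors (hypothetical values).
RANGES = {
    "demand_growth": (0.00, 0.08),
    "unit_cost": (50.0, 150.0),
    "delay_years": (0.0, 3.0),
}


def sample_futures(n, seed=1):
    """Sample futures across the whole space; no single future is the base case."""
    rng = random.Random(seed)
    return [
        {name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()}
        for _ in range(n)
    ]


def net_benefit(future):
    """Toy system model (hypothetical): ten-year revenue minus cost."""
    revenue = 1000.0 * (1.0 + future["demand_growth"]) ** 10
    cost = 8.0 * future["unit_cost"] + 100.0 * future["delay_years"]
    return revenue - cost


def failure_rate_by_half(futures, outcomes, param):
    """Share of failing futures (net benefit < 0) in the lower and upper half of a range."""
    lo, hi = RANGES[param]
    midpoint = (lo + hi) / 2.0
    low = [o < 0 for f, o in zip(futures, outcomes) if f[param] < midpoint]
    high = [o < 0 for f, o in zip(futures, outcomes) if f[param] >= midpoint]
    return sum(low) / len(low), sum(high) / len(high)


futures = sample_futures(2000)
outcomes = [net_benefit(f) for f in futures]

for param in RANGES:
    low_rate, high_rate = failure_rate_by_half(futures, outcomes, param)
    print(f"{param}: failure rate {low_rate:.1%} (lower half) vs {high_rate:.1%} (upper half)")
```

A large gap between the two halves suggests that the plan's success is sensitive to that factor anywhere in the sampled space, not only near one reference future.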

Application

Methodology

Outline:

- Decision framing

- Strategy evaluation

- Vulnerability analysis

- Trade-off analysis

- New futures and strategies

Example: Application to Water Planning and Climate Policy

Outline:

- Brief introduction to the project with description of goal and complexity

- Presentation of why and how RDM was implemented

- Conclusion and take-aways

Benefits & Limitations

Benefits

Limitations

Conclusion

- Brief summary of the article (very short)

- Why PM should consider RDM

- What are the challenges and limitations they will encounter in doing so

Other things:

- RDM tests predictions about the future and strategic moves using an iterative human-computer approach. Consideration of several likely outcomes, the pursuit of robust techniques, the employment of adaptable strategies, and the use of computers to support human deliberation are the main points of the methodology.

Further research

References

- ↑ 1.0 1.1 1.2 Marchau, V. A. W. J., Walker, W. E., Bloemen, P. J. T. M., & Popper, S. W. (2019). Decision Making under Deep Uncertainty: From Theory to Practice.

- ↑ https://www.rand.org/pardee/methods-and-tools/robust-decision-making.html

- ↑ 3.0 3.1 3.2 3.3 Lempert, R. J. (2019). Robust Decision Making (RDM). In Decision Making under Deep Uncertainty, pp. 23-51.

- ↑ Lempert, R. J., & Collins, M. T. (2007). Managing the risk of uncertain threshold responses: Comparison of robust, optimum, and precautionary approaches. Risk Analysis, 27(4), 1009-1026.

- ↑ Ramanathan, R., & Ganesh, L. S. (1994). Group preference aggregation methods employed in AHP: An evaluation and an intrinsic process for deriving members’ weightages. European Journal of Operational Research, 79(2), 249-265.

- ↑ Whang, J., & Han, S. (2009). Optimal R&D investment strategies under uncertainty for the development of new technologies. Journal of Business Research, 62(4), 441-447.

- ↑ Xu, Q., Zhang, L., & Zhang, X. (2013). The application of robust decision-making in the emergency evacuation of large-scale events. Safety Science, 57, 141-146.

- ↑ Donald Rumsfeld, Department of Defense News Briefing, February 12, 2002.

- ↑ "The Johari Window", http://wiki.doing-projects.org/index.php/The_Johari_Window, 27 February 2021

- ↑ Knight, F. H. (1921). Risk, uncertainty and profit. New York: Houghton Mifflin Company (republished in 2006 by Dover Publications, Inc., Mineola, N.Y.).

- ↑ Courtney, H. (2001). 20/20 foresight: Crafting strategy in an uncertain world. Boston: Harvard Business School Press.

- ↑ 12.0 12.1 Walker, W. E., Marchau, V. A. W. J., & Kwakkel, J. H. (2013). Uncertainty in the framework of policy analysis. In W. E. Walker & W. A. H. Thissen (Eds.), Public Policy Analysis: New Developments.

- ↑ Hillier, F. S., & Lieberman, G. J. (2001). Introduction to operations research (7th ed.). New York: McGraw Hill.

- ↑ Taleb, N. N. (2007). The black swan: The impact of the highly improbable. New York: Random House.

- ↑ Schwartz, P. (1996). The art of the long view: Paths to strategic insight for yourself and your company. New York: Currency Doubleday.

- ↑ Popper, S. W., Lempert, R. J., & Bankes, S. C. (2005). Shaping the future. Scientific American, 292(4), 66–71.

- ↑ Morgan, M. G., & Henrion, M. (1990). Uncertainty: A guide to dealing with uncertainty in quantitative risk and policy analysis. Cambridge, UK: Cambridge University Press.

- ↑ Walley, P. (1991). Statistical reasoning with imprecise probabilities. London: Chapman and Hall.

- ↑ Dewar, J. A., Builder, C. H., Hix, W. M., & Levin, M. H. (1993). Assumption-based planning: A planning tool for very uncertain times. Santa Monica, CA, RAND Corporation. https://www.rand.org/pubs/monograph_reports/MR114.html. Retrieved July 20, 2018.

- ↑ Lempert, R. J., Popper, S. W., & Bankes, S. C. (2003). Shaping the Next One Hundred Years: New Methods for Quantitative, Long-term Policy Analysis. Santa Monica, CA, RAND Corporation, MR-1626-RPC.

- ↑ Wack, P. (1985). The gentle art of reperceiving—scenarios: Uncharted waters ahead (part 1 of a two-part article). Harvard Business Review (September–October): 73–89.

- ↑ Bankes, S. C. (1993). Exploratory modeling for policy analysis. Operations Research, 41(3), 435–449.